What Are Inferential Statistics?

A Plain-Language Explainer – With Practical Examples 📑

By: Derek Jansen (MBA) | Expert Review By: Kerryn Warren (PhD) | Updated August 2025

TL;DR – Inferential Statistics 101

Inferential statistics help you test whether the patterns in your sample are likely to hold true in the wider population.

- T-tests compare the means of two groups to see if differences are significant.

- ANOVA compares the means of three or more groups at once.

- Chi-square tests assess relationships between categorical variables.

- Correlation measures the strength and direction of relationships between two variables.

- Regression uses one or more variables to predict another.

By using inferential statistics correctly, you can move from describing data (i.e. descriptive stats) to making credible, evidence-based conclusions.

If you’re new to quantitative data analysis, one of the many terms you’re likely to hear being thrown around is inferential statistics. In this post, we’ll provide an introduction to inferential stats, using straightforward language and loads of examples.

Overview: Inferential Statistics

What are inferential statistics?

At the simplest level, inferential statistics allow you to test whether the patterns you observe in a sample are likely to be present in the population – or whether they’re just a product of chance.

In stats-speak, this “Is it real or just by chance?” assessment is known as statistical significance. We won’t go down that rabbit hole in this post, but the ability to assess statistical significance is what allows inferential statistics to be used to test hypotheses and, in some cases, to make predictions.

That probably sounds rather conceptual – let’s look at a practical example.

Let’s say you surveyed 100 people (this would be your sample) in a specific city about their favourite type of food. Reviewing the data, you found that 70 people selected pizza (i.e., 70% of the sample). You could then use inferential statistics to test whether that number is just due to chance, or whether it is likely representative of preferences across the entire city (this would be your population).

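PS – you’d use a chi-square test for this example (specifically, a goodness-of-fit test, which compares the split you observed against the split you’d expect), but we’ll get to chi-square a little later.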
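If you’re curious what that calculation might look like, here is a minimal Python sketch using only the standard library. One big assumption to flag: the example above doesn’t say what you’d be testing the 70% against, so purely for illustration we assume a null hypothesis that pizza is the favourite of half the city (a 50/50 split). Swap in whatever expected split suits your own research question.

```python
import math

# Survey result from the example: 70 of 100 respondents chose pizza.
observed = [70, 30]   # [pizza, any other food]

# ASSUMPTION (not from the example): under the null hypothesis,
# half of the city would pick pizza, so we expect a 50/50 split.
expected = [50, 50]

# Chi-square statistic: sum of (observed - expected)^2 / expected.
chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))

# With two categories there is 1 degree of freedom, and for that case
# the chi-square tail probability has the closed form erfc(sqrt(chi2 / 2)).
p_value = math.erfc(math.sqrt(chi2 / 2))

print(f"chi-square = {chi2:.2f}, p-value = {p_value:.5f}")
```

A very small p-value here would suggest that a 70/30 split is unlikely to appear by chance if the city were truly split 50/50.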

Inferential vs Descriptive

At this point, you might be wondering how inferential statistics differ from descriptive statistics. At the simplest level, descriptive statistics summarise and organise the data you already have (your sample), making it easier to understand.

Inferential statistics, on the other hand, allow you to use your sample data to assess whether the patterns contained within it are likely to be present in the broader population, and potentially, to make predictions about that population.

It’s example time again…

Let’s imagine you’re undertaking a study that explores shoe brand preferences among men and women. If you just wanted to identify the proportions of those who prefer different brands, you’d only require descriptive statistics.

However, if you wanted to assess whether those proportions genuinely differ between genders in the broader population (i.e., that the difference you see in your sample is not just down to chance), you’d need to use inferential statistics.

In short, descriptive statistics describe your sample, while inferential statistics help you understand whether the patterns in your sample are likely to be reflected in the broader population.

Let’s look at some inferential tests

Now that we’ve defined inferential statistics and explained how they differ from descriptive statistics, let’s take a look at some of the most common tests within the inferential realm. It’s worth highlighting upfront that there are many different types of inferential tests and this is most certainly not a comprehensive list – just an introductory list to get you started.

T-tests

A t-test is a way to compare the means (averages) of two groups to see if they are meaningfully different, or if the difference is just by chance. In other words, it assesses whether the difference is statistically significant. This is important because comparing two means side-by-side can be very misleading when the values within the groups are widely spread, i.e., when one or both groups have a high variance (if this sounds like gibberish, check out our descriptive statistics post).

As an example, you might use a t-test to see if there’s a statistically significant difference between the exam scores of two mathematics classes taught by different teachers. This might then lead you to infer that one teacher’s teaching method is more effective than the other.

It’s worth noting that there are a few different types of t-tests. In this example, we’re referring to the independent t-test, which compares the means of two groups, as opposed to the mean of one group at different times (i.e., a paired t-test). Each of these tests has its own set of assumptions and requirements, as do all of the tests we’ll discuss here – but we’ll save assumptions for another post!
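To make the independent t-test a little more concrete, here is a minimal Python sketch using only the standard library. The exam scores are made up for illustration and the function name `independent_t` is our own. The sketch assumes the classic pooled-variance (Student’s) version of the test, which in turn assumes that the two classes have similar variances. The critical value of 2.101 comes from a standard t-table (two-tailed, 5% significance level, 18 degrees of freedom).

```python
import math
import statistics

# Hypothetical exam scores for two classes (made-up data for illustration).
class_a = [72, 75, 78, 80, 81, 83, 85, 86, 88, 92]
class_b = [65, 68, 70, 72, 74, 75, 77, 79, 82, 88]


def independent_t(sample_1, sample_2):
    """Return (t statistic, degrees of freedom) for a pooled-variance t-test."""
    n1, n2 = len(sample_1), len(sample_2)
    mean_1, mean_2 = statistics.mean(sample_1), statistics.mean(sample_2)
    var_1, var_2 = statistics.variance(sample_1), statistics.variance(sample_2)
    df = n1 + n2 - 2
    # Pool the two sample variances, weighted by their degrees of freedom.
    pooled_var = ((n1 - 1) * var_1 + (n2 - 1) * var_2) / df
    # Standard error of the difference between the two means.
    std_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean_1 - mean_2) / std_error, df


t_stat, df = independent_t(class_a, class_b)

# Two-tailed critical value for alpha = 0.05 and 18 degrees of freedom,
# taken from a standard t-table.
T_CRITICAL_DF18 = 2.101
significant = abs(t_stat) > T_CRITICAL_DF18

print(f"t = {t_stat:.3f}, df = {df}, significant at 5%: {significant}")
```

In practice, you’d more likely let a statistics package do the heavy lifting. For example, SciPy’s `scipy.stats.ttest_ind` reports the p-value directly, so there’s no need to look up a critical value.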

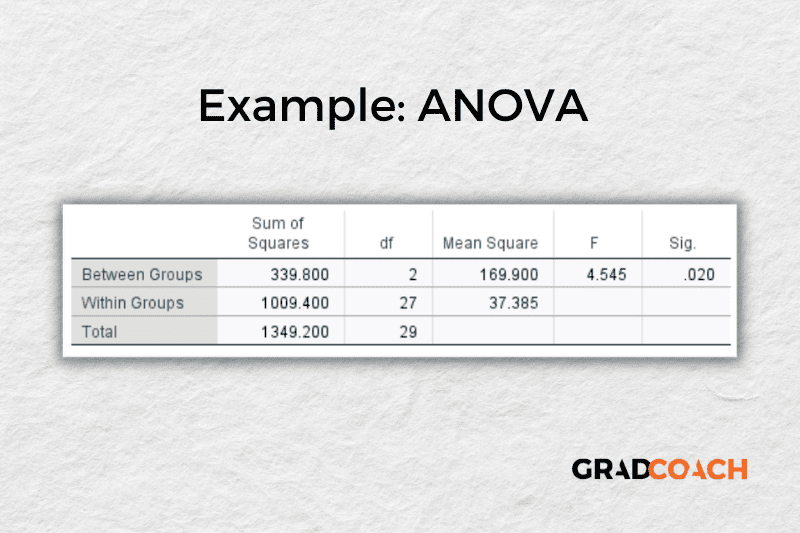

ANOVA

While a t-test compares the means of just two groups, an ANOVA (which stands for Analysis of Variance) can compare the means of more than two groups at once. Again, this helps you assess whether the differences in the means are statistically significant or simply a product of chance.

For example, if you want to know whether students’ test scores vary based on the type of school they attend – public, private, or homeschool – you could use ANOVA to compare the average standardised test scores of the three groups.

Similarly, you could use ANOVA to compare the average sales of a product across multiple stores. Based on this data, you could make an inference as to whether location is related to sales.

In these examples, we’re specifically referring to what’s called a one-way ANOVA, but as always, there are multiple types of ANOVAs for different applications. So, be sure to do your research before opting for any specific test.
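As a rough illustration of a one-way ANOVA, the Python sketch below works through the school-type example with made-up test scores, using only the standard library. The function name `one_way_anova` is our own. Note the shortcut used for the p-value: when there are exactly three groups (two “between-group” degrees of freedom), the tail of the F-distribution has a simple closed form. With any other number of groups, you’d need an F-table or a statistics package instead.

```python
import statistics

# Hypothetical standardised test scores (made-up data for illustration).
scores = {
    "public": [70, 72, 75, 78, 80],
    "private": [78, 80, 82, 85, 90],
    "homeschool": [74, 76, 79, 81, 85],
}


def one_way_anova(groups):
    """Return (F statistic, between-group df, within-group df)."""
    all_values = [value for group in groups for value in group]
    grand_mean = statistics.fmean(all_values)
    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(group) * (statistics.fmean(group) - grand_mean) ** 2
        for group in groups
    )
    # Variation of individual scores around their own group mean.
    ss_within = sum(
        (value - statistics.fmean(group)) ** 2
        for group in groups
        for value in group
    )
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


f_stat, df_between, df_within = one_way_anova(list(scores.values()))

# Closed-form p-value, valid ONLY when df_between == 2 (three groups).
p_value = (1 + 2 * f_stat / df_within) ** (-df_within / 2)

print(f"F({df_between}, {df_within}) = {f_stat:.3f}, p-value = {p_value:.3f}")
```

If the p-value falls below your chosen significance level (commonly 0.05), that suggests at least one group mean differs from the others, although the ANOVA itself doesn’t tell you which one. Statistics packages offer ready-made versions of this test, such as SciPy’s `scipy.stats.f_oneway`.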

Chi-square

While t-tests and ANOVAs test for differences in the means across groups, the chi-square test is used to see if there’s a difference in the proportions of various categories. In stats-speak, the chi-square test assesses whether there’s a statistically significant relationship between two categorical variables (i.e., nominal or ordinal data). If you’re not familiar with these terms, check out our explainer video.

As an example, you could use a chi-square test to check if there’s a link between gender (e.g., male and female) and preference for a certain category of car (e.g., sedans or SUVs). Similarly, you could use this type of test to see if there’s a relationship between the type of breakfast people eat (cereal, toast, or nothing) and their university major (business, math or engineering).
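Here is what the gender and car preference example might look like as a minimal Python sketch, using only the standard library. The survey counts are invented for illustration and the function name `chi_square_independence` is our own. The p-value shortcut in the last step only works for a 2x2 table (one degree of freedom). A bigger table, such as the breakfast and major example, would need a chi-square table or a statistics package.

```python
import math

# Hypothetical survey counts (made-up data for illustration).
# Columns: [sedan, SUV]
observed = [
    [30, 20],  # male respondents
    [15, 35],  # female respondents
]


def chi_square_independence(table):
    """Return (chi-square statistic, degrees of freedom) for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, count in enumerate(row):
            # Count we'd expect in this cell if the two variables were unrelated.
            expected = row_totals[i] * col_totals[j] / grand_total
            chi2 += (count - expected) ** 2 / expected
    df = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, df


chi2, df = chi_square_independence(observed)

# Closed-form p-value, valid ONLY for df == 1 (a 2x2 table).
p_value = math.erfc(math.sqrt(chi2 / 2))

print(f"chi-square = {chi2:.3f}, df = {df}, p-value = {p_value:.4f}")
```

One thing to be aware of if you compare this against a statistics package: SciPy’s `scipy.stats.chi2_contingency` applies a continuity correction to 2x2 tables by default, so its result will differ slightly from this uncorrected version.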

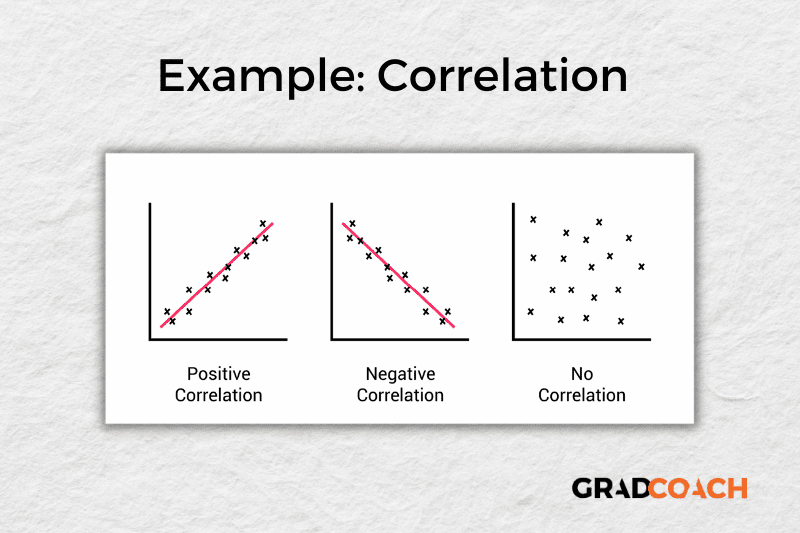

Correlation

Correlation analysis looks at the relationship between two numerical variables (like height and weight) to assess whether they “move together” in some way. In stats-speak, correlation assesses whether a statistically significant relationship exists between two variables that are interval or ratio in nature.

For example, you might find a correlation between hours spent studying and exam scores. This would suggest that generally, the more hours people spend studying, the higher their scores are likely to be.

Similarly, a correlation analysis may reveal a negative relationship between time spent watching TV and physical fitness (represented by VO2 max levels), where the more time spent in front of the television, the lower the physical fitness level.

When running a correlation analysis, you’ll be presented with a correlation coefficient (also known as an r-value), which is a number between -1 and 1. A value close to 1 means that the two variables move closely together in the same direction, while a value close to -1 means that they move in opposite directions. A value close to zero means there’s no clear linear relationship between the two variables (although a curved, non-linear relationship could still exist).

What’s important to highlight here is that while correlation analysis can help you understand how two variables are related, it doesn’t prove that one causes the other. As the adage goes, correlation is not causation.

Regression

While correlation allows you to see whether there’s a relationship between two numerical variables, regression takes it a step further by allowing you to make predictions about the value of one variable (called the dependent variable) based on the value of one or more other variables (called the independent variables).

For example, you could use regression analysis to predict house prices based on the number of bedrooms, location, and age of the house. The analysis would give you an equation that lets you plug in these factors to estimate a house’s price. Similarly, you could potentially use regression analysis to predict a person’s weight based on their height, age, and daily calorie intake.

It’s worth noting that in these examples, we’ve been talking about multiple regression, as there are multiple independent variables. While this is a popular form of regression, there are many others, including simple linear, logistic and multivariate. As always, be sure to do your research before selecting a specific statistical test.

As with correlation, keep in mind that regression analysis alone doesn’t prove causation. While it can show that variables are related and help you make predictions, it can’t prove that one variable causes another to change. Other factors that you haven’t included in your model could be influencing the results. To establish causation, you’d typically need a very specific research design that allows you to control all (or at least most) variables.

Let’s Recap

We’ve covered quite a bit of ground. Here’s a quick recap of the key takeaways:

- Inferential stats allow you to assess whether patterns in your sample are likely to be present in your population.

- Some common inferential statistical tests include t-tests, ANOVA, chi-square, correlation and regression.

- Inferential statistics alone do not prove causation. To identify and measure causal relationships, you need a very specific research design.

If you’d like 1-on-1 help with your inferential statistics, check out our private coaching service, where we hold your hand throughout the quantitative research process.
